FoH Focus Group in Czechia Highlights the Human Role in AI-Supported Healthcare

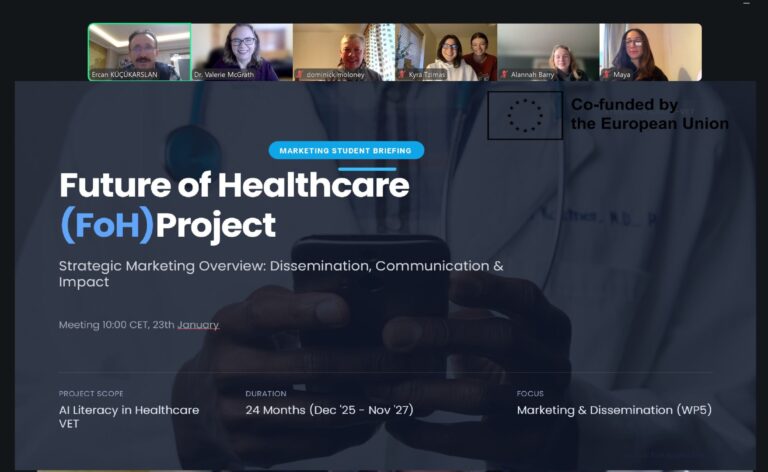

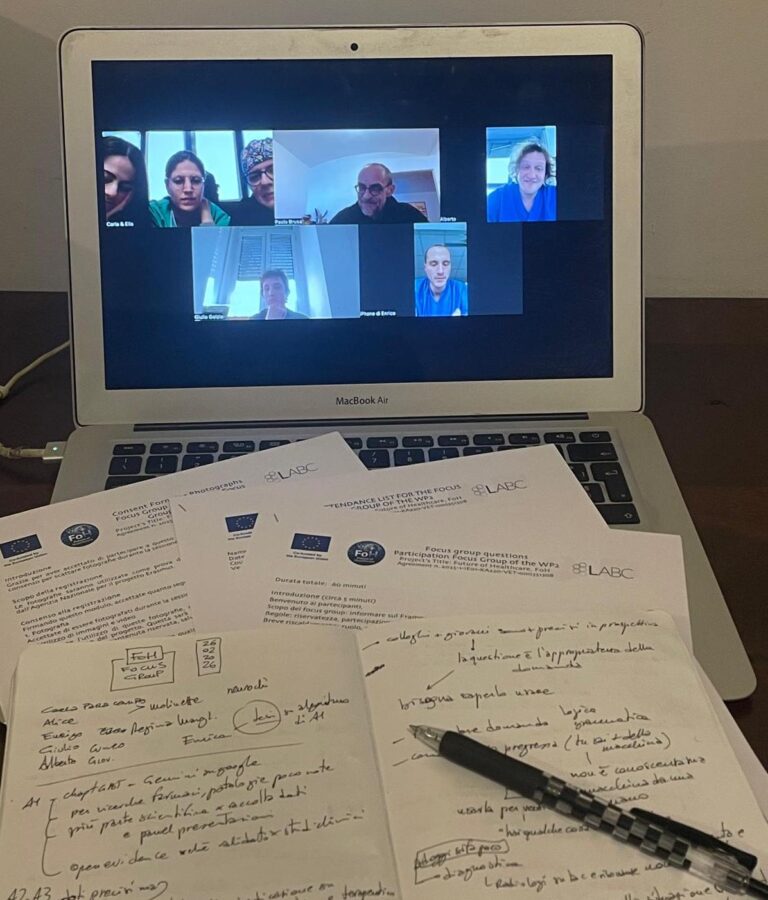

As part of the FoH – Future of Healthcare Erasmus+ project, a focus group was successfully organised in Czechia by Vysoká škola zdravotnická (Medical College, Prague). The session brought together healthcare professionals, academics, and paramedics to explore the role of Artificial Intelligence (AI) in healthcare education and clinical practice.

The discussion provided valuable insights into how AI is currently perceived and used, as well as the competencies, ethical considerations, and communication challenges associated with its integration into healthcare systems.

AI Use in Education and Clinical Contexts

Participants highlighted that AI is currently used primarily in educational and research settings, supporting tasks such as content creation, development of learning materials, and information retrieval. Tools such as ChatGPT, Gemini, and other AI-based platforms are increasingly part of academic workflows.

In clinical environments, AI is gradually being introduced through diagnostic support systems, medical documentation tools, and risk assessment applications. However, participants emphasised that direct reliance on AI in patient care remains limited, mainly due to concerns about the reliability, transparency, and validation of its outputs.

Ethics, Responsibility, and Human Oversight

A central theme of the discussion was the importance of ethical responsibility and clear accountability in AI-supported healthcare. Participants expressed concerns regarding data protection, legal responsibility, and the lack of clear regulatory frameworks.

There was strong agreement that responsibility should be shared among healthcare professionals, institutions, and AI developers, while maintaining a clear principle: final clinical decisions must always remain under human control.

Participants also identified specific areas where AI should never replace human judgement, including diagnosis, treatment decisions, and communication with patients, especially in sensitive or complex situations.

Key Takeaways

• AI is widely used in education and knowledge support, but its use in direct clinical decision-making remains limited

• Trust in AI remains low due to lack of transparency and validation

• Healthcare professionals require basic AI literacy and critical evaluation skills

• Ethical concerns focus on data privacy, responsibility, and patient safety

• Human oversight and professional judgement must remain central in AI-supported healthcare

• Communication, empathy, and trust are essential in maintaining patient relationships

The Czechia focus group reinforced a key principle of the FoH project: AI should enhance healthcare, but never replace the human role at its core. Participants emphasised that successful integration of AI depends not only on technical competencies, but also on ethical awareness, critical thinking, and strong communication skills. This holistic perspective will inform the FoH Framework and future training modules, helping to ensure that healthcare professionals are equipped to use AI responsibly while preserving trust, safety, and human-centred care.